Abstract

LLM-based agents are being deployed for autonomous penetration testing,
but nobody has solved the constraint problem. These agents run on
system-prompt "guardrails" that don't actually guard anything. Research
shows agents take risky actions 23.9% of the time even with explicit
safety instructions (Ruan et al., 2024). GPT-4 reaches only 72.52% F1 on
safety risk awareness (Yuan et al., 2024). ROE Gate is the first reference
monitor built for this problem: out-of-band evaluation, cryptographic
action signing, and an isolated judge LLM that can't be prompt-injected by
the agent it's evaluating.

The Problem: Prompt-Based Safety Is Not Enforcement

Current approaches to constraining AI pentest agents all fail in practice:

System Prompt Instructions.

Telling the model "do not scan out-of-scope targets" gives you zero
enforcement. The model can ignore these instructions under prompt
injection, after context window overflow, or simply because "being
helpful" wins out over "follow the rules."

Output Filtering.

Content filters check model outputs after the fact, but they can't stop
tool execution. By the time the filter flags something, the agent has
already run the command.

Self-Critique / Constitutional AI.

Having the same model evaluate its own actions is circular. If the model
gets prompt-injected, the self-critic is just as compromised. Same model,
same context, no isolation.

General-Purpose Policy Engines.

Systems like OPA/Rego can evaluate policies, but they have no model of LLM
agent actions, can't semantically evaluate edge cases, and provide no
cryptographic binding between policy approval and tool execution.

The ROE Gate Architecture

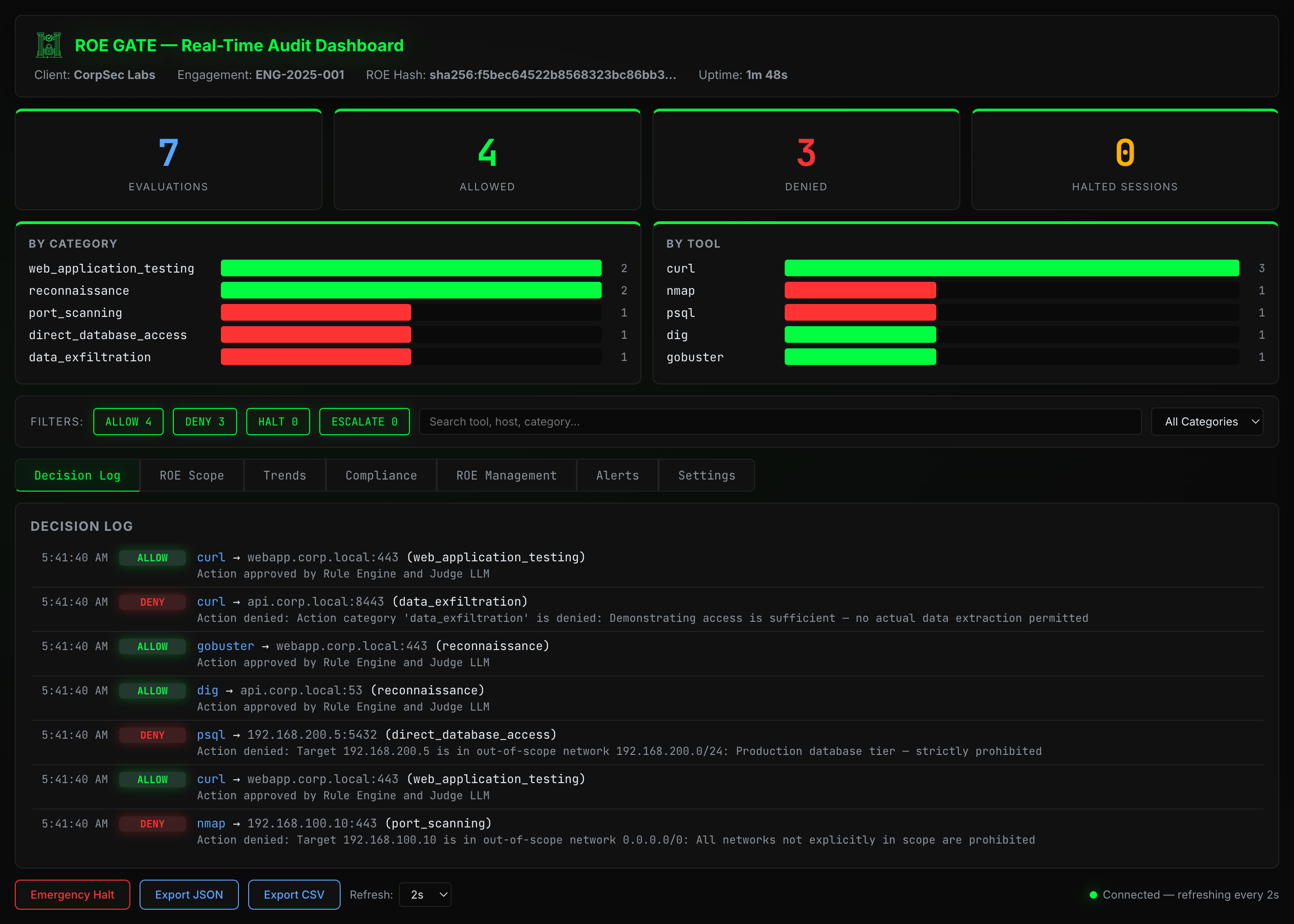

ROE Gate implements the reference monitor pattern (Anderson, 1972): every
access to a protected resource must pass through a tamper-resistant
mediator. The gate runs as a separate process from the agent, with its own
signing keys and its own evaluation context. The agent can't modify it,
bypass it, or influence its decisions.

Six components:

1. Action Intent Serializer.

Every tool call from the agent is converted to a structured ActionIntent,
a tool-agnostic intermediate representation that carries the action
category, target, parameters, and impact assessment. The same policy
therefore governs any tool, regardless of its command syntax.

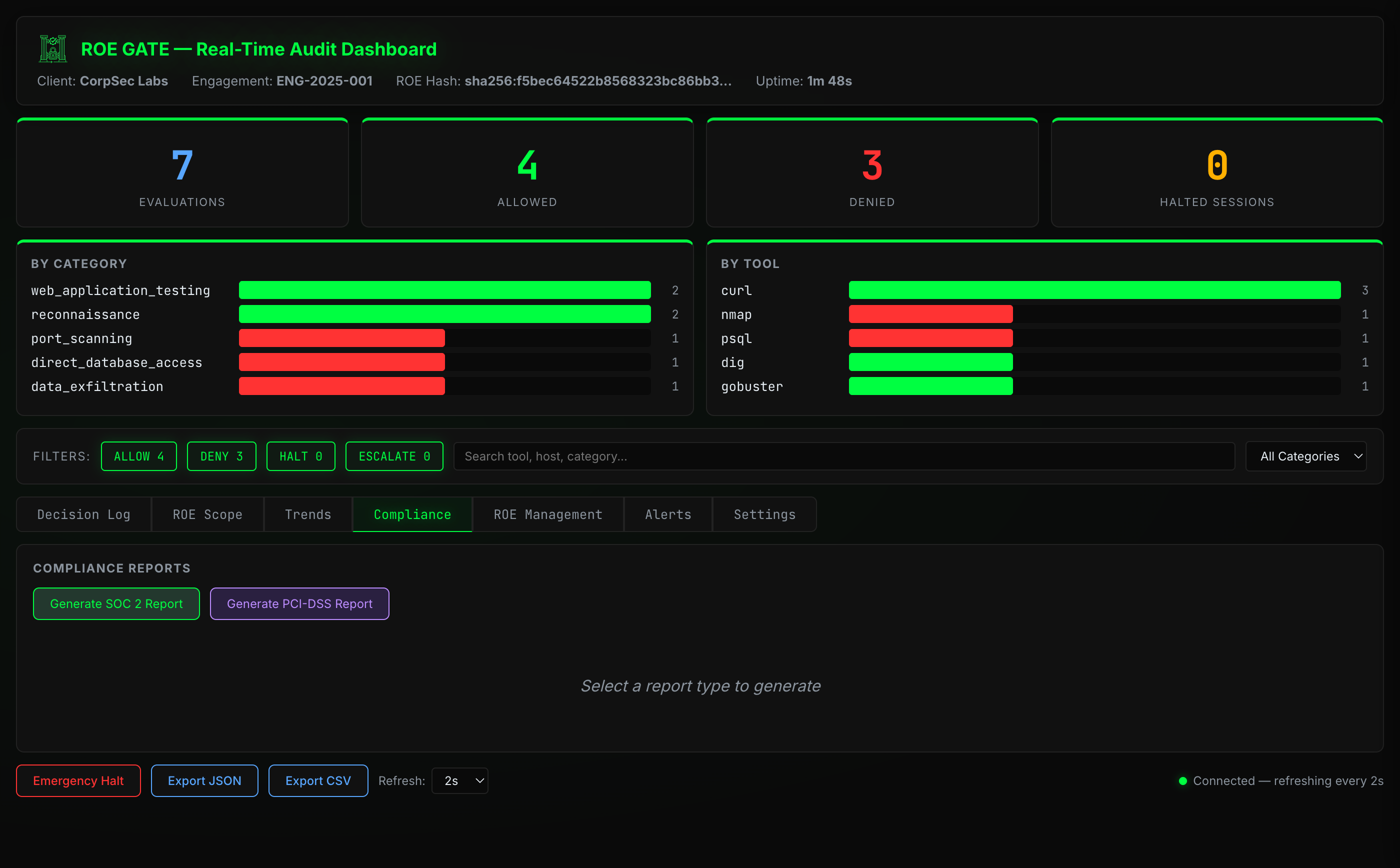

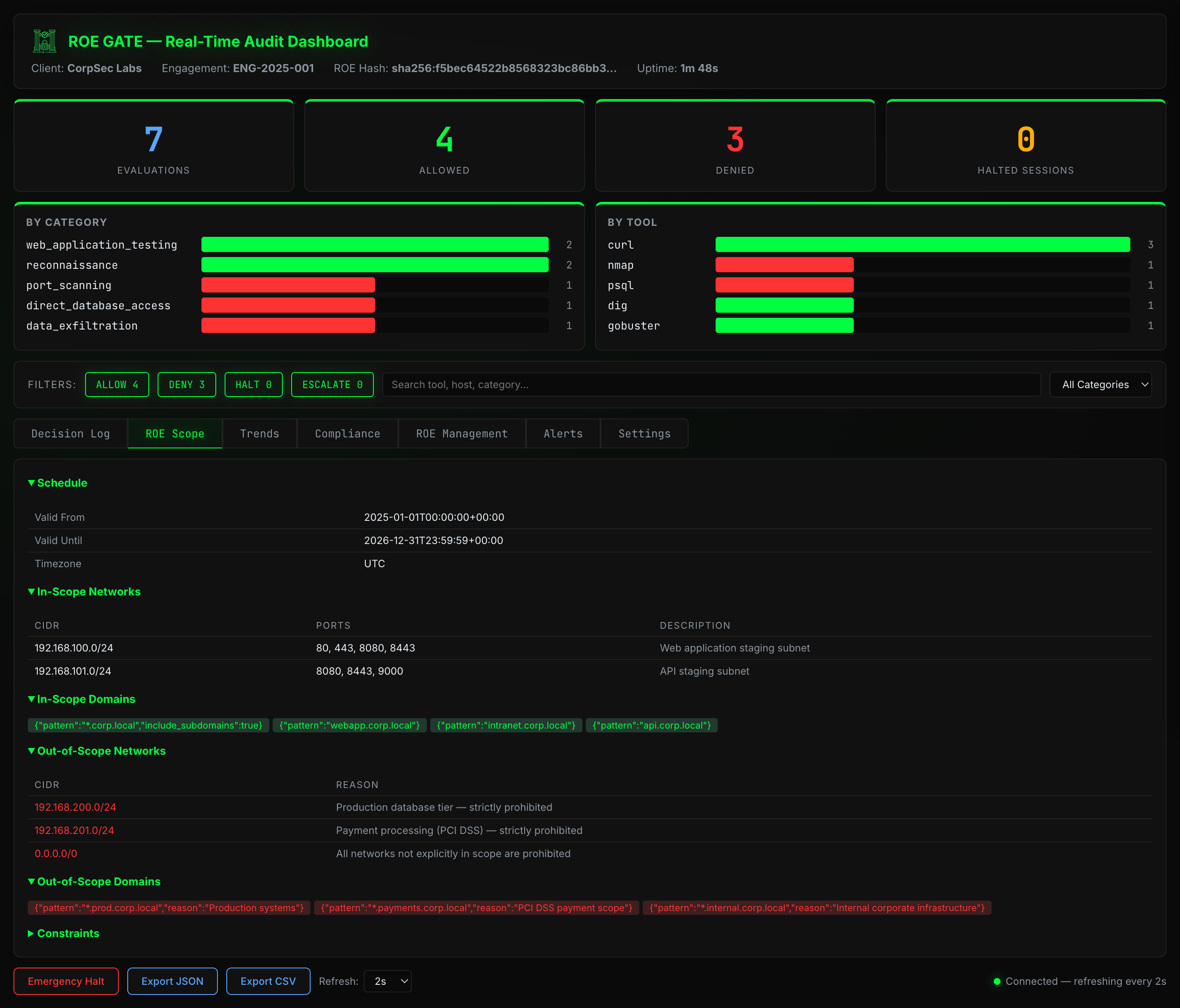

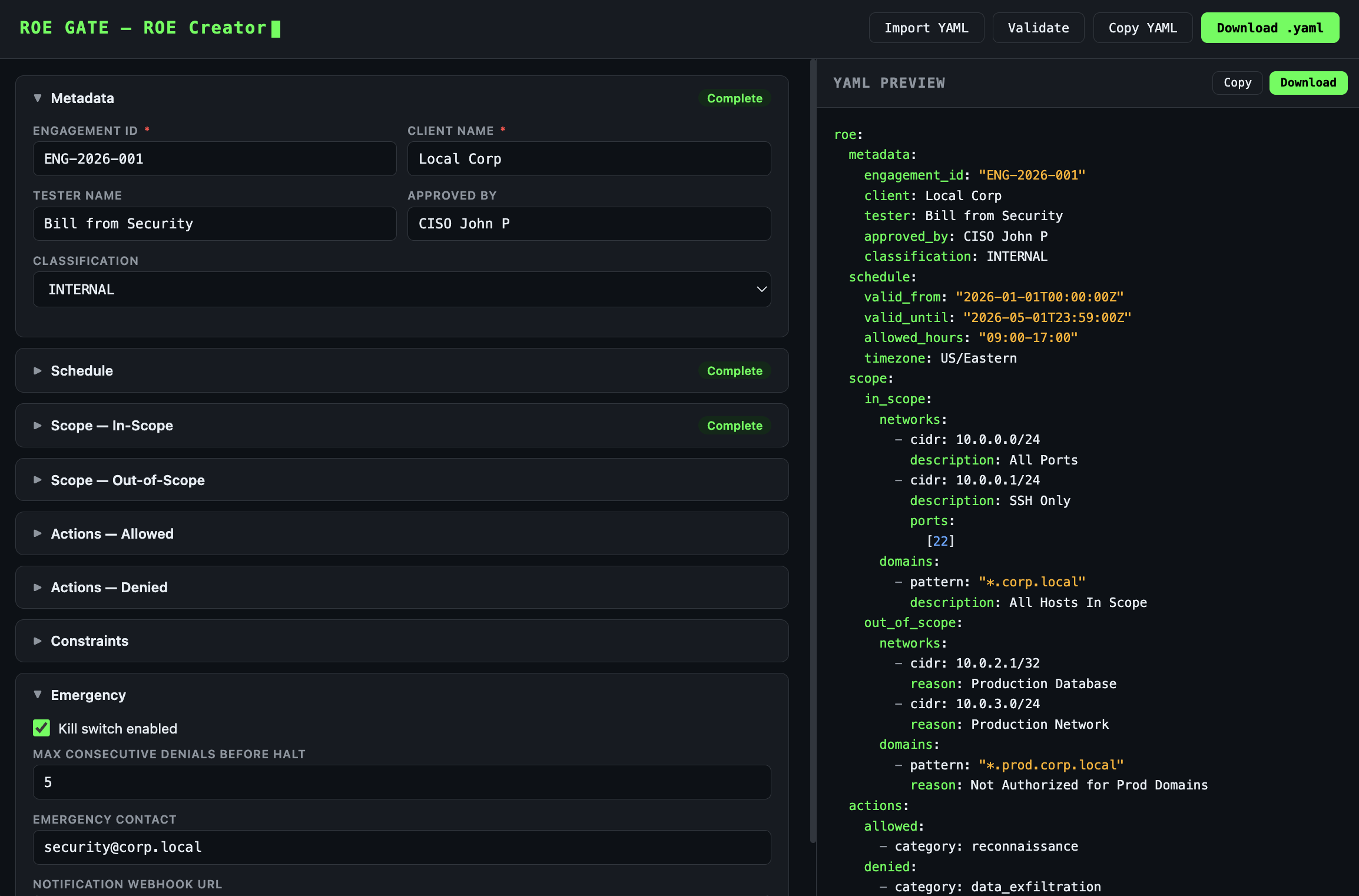
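As a rough illustration of the ActionIntent idea from component 1, the sketch below maps two different scanners onto one intermediate representation. The field names, the `TOOL_CATEGORIES` table, and the `to_intent` helper are assumptions made for this example, not the actual ROE Gate schema.

```python
from dataclasses import dataclass, field, asdict

# Hypothetical mapping from tool name to (action category, impact level).
TOOL_CATEGORIES = {
    "nmap": ("port_scan", "low"),
    "masscan": ("port_scan", "low"),
    "sqlmap": ("exploit_attempt", "high"),
}


@dataclass(frozen=True)
class ActionIntent:
    """Tool-agnostic description of what the agent wants to do."""
    category: str
    target: str
    parameters: dict = field(default_factory=dict)
    impact: str = "low"


def to_intent(tool_call: dict) -> ActionIntent:
    """Convert a raw tool call (assumed shape: {"tool": ..., "args": {...}})."""
    tool = tool_call["tool"]
    if tool not in TOOL_CATEGORIES:
        raise ValueError(f"unknown tool: {tool}")
    category, impact = TOOL_CATEGORIES[tool]
    args = dict(tool_call.get("args", {}))  # copy so the caller's dict is untouched
    target = args.pop("target")
    return ActionIntent(category=category, target=target,
                        parameters=args, impact=impact)


a = to_intent({"tool": "nmap", "args": {"target": "10.0.0.5", "ports": "22,80"}})
b = to_intent({"tool": "masscan", "args": {"target": "10.0.0.5", "ports": "22,80"}})
print(asdict(a))
print(a == b)  # True: both tools reduce to the same intent
```

Because the two calls produce equal intents, a policy written against `category` and `target` applies to both without knowing either tool's syntax.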

2. ROE Specification Language (ROE-SL).

A YAML-based domain-specific language for defining penetration testing
rules of engagement. It covers scope (IP ranges, domains, ports), allowed
and denied action categories, schedule windows, data handling
requirements, and emergency procedures. The ROE spec is hashed with
SHA-256, and that hash is bound to every signed token.
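The hash binding in component 2 could look roughly like the sketch below. It assumes the YAML has already been parsed into a dictionary and that the hash is taken over a canonical JSON form. The text above does not say whether ROE Gate hashes the raw file or a parsed form, and every field name here is illustrative.

```python
import hashlib
import json

# Hypothetical ROE spec after YAML parsing; keys are illustrative only.
roe_spec = {
    "scope": {
        "networks": ["10.0.0.0/24"],
        "domains": ["example.com"],
        "ports": [22, 80, 443],
    },
    "actions": {
        "allowed": ["port_scan", "service_enum"],
        "denied": ["denial_of_service"],
    },
    "schedule": {"start": "22:00", "end": "06:00", "timezone": "UTC"},
}


def roe_hash(spec: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, no whitespace)."""
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


print(roe_hash(roe_spec))
```

Sorting keys means two semantically identical specs hash to the same value, while any change to scope, actions, or schedule yields a different hash and so invalidates tokens issued under the old policy.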

3. Deterministic Rule Engine.

Eight evaluation checks in strict priority order provide fast, predictable
enforcement. Schedule violations, out-of-scope targets, and hard-denied
categories are rejected instantly without needing an LLM call.
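A minimal sketch of priority-ordered evaluation, covering only the three checks named above (the full set of eight is not listed here). The `evaluate` function, the dictionary keys, and the ALLOW / DENY / ESCALATE verdicts are assumptions for illustration, and targets are assumed to be IP addresses.

```python
import ipaddress
from datetime import datetime, time


def in_window(now: time, start: time, end: time) -> bool:
    """True if `now` falls in [start, end), including windows that cross midnight."""
    if start <= end:
        return start <= now < end
    return now >= start or now < end


def evaluate(intent: dict, roe: dict, now: datetime) -> tuple:
    """Run checks in fixed order; the first failing check decides the verdict."""
    # 1. Schedule window
    if not in_window(now.time(), roe["window_start"], roe["window_end"]):
        return ("DENY", "outside schedule window")
    # 2. Target scope
    addr = ipaddress.ip_address(intent["target"])
    if not any(addr in ipaddress.ip_network(net) for net in roe["networks"]):
        return ("DENY", "target out of scope")
    # 3. Hard-denied categories
    if intent["category"] in roe["denied"]:
        return ("DENY", "category hard-denied")
    if intent["category"] in roe["allowed"]:
        return ("ALLOW", "category allowed")
    # Anything the rules cannot settle would go to the judge.
    return ("ESCALATE", "needs judge review")


roe = {
    "window_start": time(22, 0),
    "window_end": time(6, 0),
    "networks": ["10.0.0.0/24"],
    "allowed": ["port_scan"],
    "denied": ["denial_of_service"],
}
night = datetime(2025, 1, 1, 23, 0)
print(evaluate({"category": "port_scan", "target": "10.0.0.5"}, roe, night))
print(evaluate({"category": "port_scan", "target": "192.168.1.1"}, roe, night))
```

Every branch here is a plain comparison, so the same input always produces the same verdict and no model call is involved.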

4. Isolated Judge LLM.

A separate LLM instance evaluates edge cases that the deterministic engine
can't resolve. The judge sees only the action and the policy, never the
agent's conversation context. This isolation stops prompt injection from
propagating through the evaluation chain.

5. Cryptographic Action Signer.

Approved actions receive cryptographically signed tokens (HMAC-SHA256 or
Ed25519) with a 30-second TTL, single-use nonces, canonical JSON
serialization, and ROE-hash binding. Only the Gate Service holds the
signing keys; the agent never has access to them. Ed25519 asymmetric
signing allows auditors to verify tokens with only the public key.
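The token properties listed in component 5 can be sketched with the standard library alone, using the HMAC-SHA256 variant. The token layout and the `sign_action` / `signature_valid` helpers are assumptions for this example. The Ed25519 variant would need a third-party library and is not shown.

```python
import hashlib
import hmac
import json
import secrets
import time
from typing import Optional

TTL_SECONDS = 30


def canonical(obj) -> bytes:
    """Canonical JSON: sorted keys, no insignificant whitespace."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_action(key: bytes, intent: dict, roe_hash: str,
                now: Optional[float] = None) -> dict:
    """Issue a short-lived, single-use token bound to one intent and one ROE."""
    issued = int(time.time() if now is None else now)
    payload = {
        "intent": intent,
        "roe_hash": roe_hash,
        "nonce": secrets.token_hex(16),      # single-use identifier
        "expires_at": issued + TTL_SECONDS,  # 30-second TTL
    }
    signature = hmac.new(key, canonical(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "signature": signature}


def signature_valid(key: bytes, token: dict) -> bool:
    """Recompute the MAC over the payload and compare in constant time."""
    expected = hmac.new(key, canonical(token["payload"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, token["signature"])


gate_key = secrets.token_bytes(32)  # held by the gate process, never by the agent
token = sign_action(gate_key, {"category": "port_scan", "target": "10.0.0.5"},
                    roe_hash="ab" * 32, now=1000)
print(signature_valid(gate_key, token))
```

Signing the canonical form rather than an arbitrary serialization means the verifier can rebuild exactly the same bytes from the parsed token, so any change to the intent, nonce, expiry, or ROE hash breaks the signature.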

6. Signature-Enforcing Tool Executor.

A verification proxy that performs six checks before executing any tool:
signature validity, token expiration, replay detection, ROE hash match,
action/token correspondence, and tool whitelist membership.
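The six checks in component 6 might be ordered as in the sketch below. It assumes HMAC-SHA256 tokens, so the executor shares the MAC key with the gate (never with the agent). The `ToolExecutor` class, token layout, and whitelist contents are all hypothetical.

```python
import hashlib
import hmac
import json

ALLOWED_TOOLS = {"nmap", "curl"}  # hypothetical whitelist


def canonical(obj) -> bytes:
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def mac(key: bytes, payload: dict) -> str:
    return hmac.new(key, canonical(payload), hashlib.sha256).hexdigest()


class ToolExecutor:
    def __init__(self, key: bytes, roe_hash: str):
        self.key = key
        self.roe_hash = roe_hash
        self.seen_nonces = set()

    def check(self, token: dict, action: dict, now: float) -> str:
        """Return "ok" only if all six checks pass; otherwise the failure reason."""
        payload = token["payload"]
        # 1. Signature validity
        if not hmac.compare_digest(mac(self.key, payload), token["signature"]):
            return "bad signature"
        # 2. Token expiration
        if now >= payload["expires_at"]:
            return "token expired"
        # 3. Replay detection
        if payload["nonce"] in self.seen_nonces:
            return "replayed token"
        # 4. ROE hash match
        if payload["roe_hash"] != self.roe_hash:
            return "ROE hash mismatch"
        # 5. Action/token correspondence
        if canonical(payload["intent"]) != canonical(action):
            return "action does not match token"
        # 6. Tool whitelist membership
        if action["tool"] not in ALLOWED_TOOLS:
            return "tool not whitelisted"
        self.seen_nonces.add(payload["nonce"])  # consume the nonce
        return "ok"


key = b"demo-key-shared-by-gate-and-executor"
executor = ToolExecutor(key, roe_hash="abc")
action = {"tool": "nmap", "category": "port_scan", "target": "10.0.0.5"}
payload = {"intent": action, "roe_hash": "abc", "nonce": "n1", "expires_at": 130}
token = {"payload": payload, "signature": mac(key, payload)}
print(executor.check(token, action, now=100))  # ok
print(executor.check(token, action, now=100))  # replayed token
```

A real executor would run the tool only after `check` returns "ok". The nonce is recorded last so that a token rejected for another reason is not also marked as used.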

Why Not Just Use Guardrails?

Existing guardrail systems (NeMo Guardrails, Guardrails AI, etc.) operate
at the wrong layer. They filter LLM outputs, checking whether the text the
model generates is safe. ROE Gate operates at the tool execution layer,
checking whether the action the model wants to perform is authorized.
Output filtering happens after the decision. Tool-call gating happens
before execution.

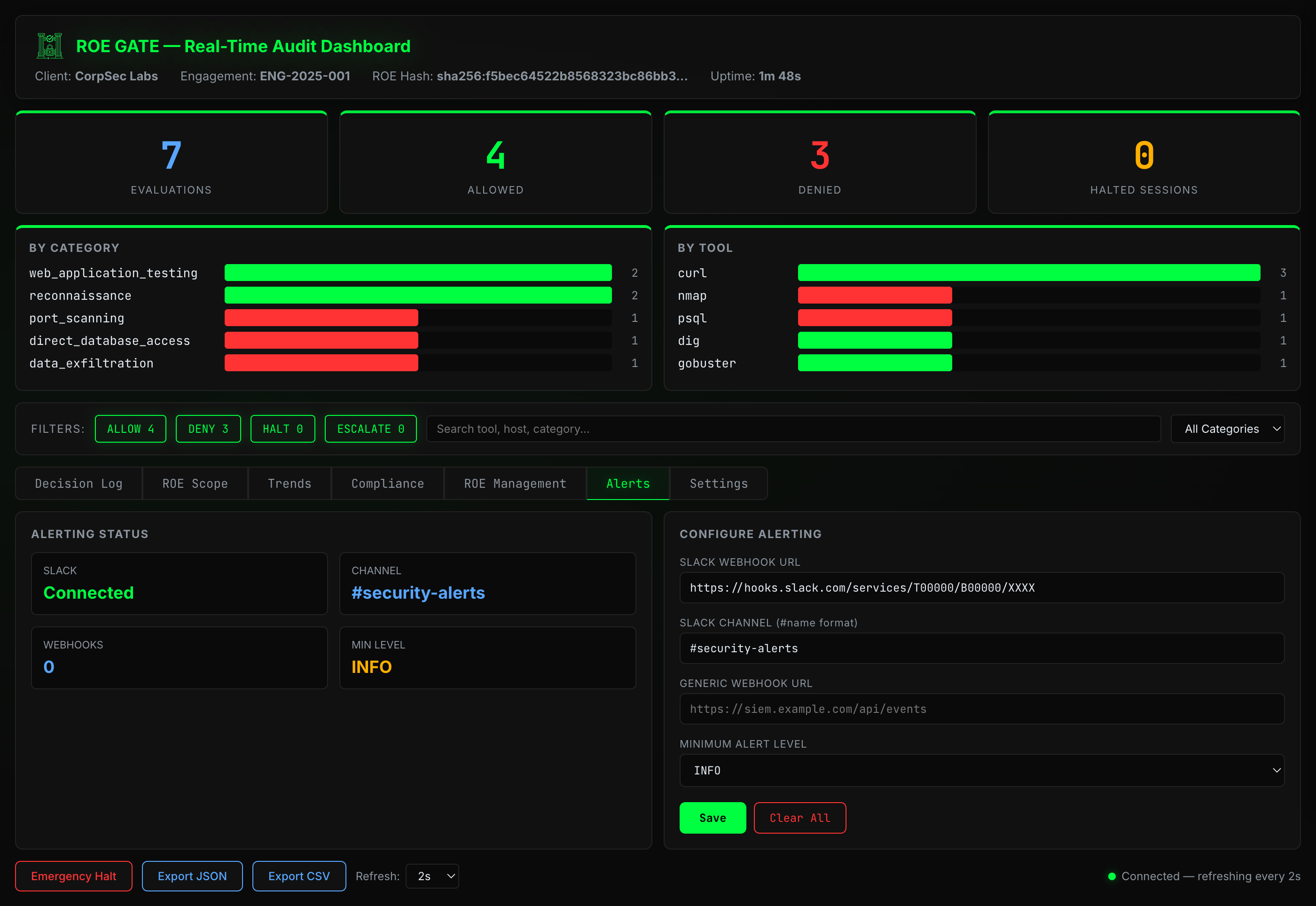

ROE Gate also provides cryptographic proof that an action was evaluated
and approved. No existing guardrail system does this. The signed token
creates a verifiable chain of custody: policy → evaluation → approval →
execution, each step cryptographically bound to the others.

Prior Art Comparison

System                        ROE-SL  Rules   Judge   Crypto  Audit
───────────────────────────────────────────────────────────────────
NVIDIA NeMo Guardrails        ✗       ~       ✗       ✗       ~
Guardrails AI                 ✗       ~       ✗       ✗       ✗
OPA / Rego                    ✗       ✓       ✗       ✗       ~
Constitutional AI             ✗       ✗       ~       ✗       ✗
GuardAgent (Xiang 2024)       ✗       ~       ✓       ✗       ~
AgentSpec (Wang, ICSE 2026)   ✗       ✓       ✗       ✗       ~
Pentera / XM Cyber            ✗       ~       ✗       ✗       ✓
ROE Gate                      ✓       ✓       ✓       ✓       ✓

Key Research References

Anderson, J.P. (1972). "Computer Security Technology Planning Study." The original reference monitor definition.

Ruan et al. (2024). "ToolEmu: Identifying the Risks of LM Agents with an LM-Emulated Sandbox." ICLR 2024 Spotlight. Agents take risky actions 23.9% of the time.

Yuan et al. (2024). "R-Judge: Benchmarking Safety Risk Awareness for LLM Agents." EMNLP Findings 2024. GPT-4 achieves only 72.52% safety risk awareness (F1).

Debenedetti et al. (2024). "AgentDojo: A Dynamic Environment to Evaluate Prompt Injection Attacks and Defenses for LLM Agents." NeurIPS 2024 D&B. No prompt-based defense achieves both high utility and high security.

Zhang et al. (2025). "Agent-SafetyBench: Evaluating the Safety of LLM Agents." arXiv:2412.14470. None of 16 LLM agents achieves a safety score above 60%; defense prompts alone are insufficient.

Wang et al. (2026). "AgentSpec: Customizable Runtime Enforcement for Safe and Reliable LLM Agents." ICSE 2026. Validates the need for external runtime enforcement with formal specifications.

Dalrymple et al. (2024). "Towards Guaranteed Safe AI: A Framework for Ensuring Robust and Reliable AI Systems." World model + safety spec + verifier framework, the same pattern ROE Gate follows.

// patent pending — U.S. App. No. 63/993,983
